AI still looks like a software story. It is increasingly becoming an industrial one.

Most of the conversation around artificial intelligence still happens at the model layer: which model reasons better, which one is cheaper to run, which one gains developer mindshare faster, which one turns into a product sooner. That is where attention goes, and attention usually goes where novelty is most visible.

But that is no longer where the real constraint sits.

The bottleneck is moving underneath the software stack and into the physical system that makes the software possible. Compute capacity, advanced packaging, power availability, grid access, cooling, land, and data center buildouts are becoming the rate-limiting steps. The International Energy Agency now expects global data-center electricity consumption to more than double by 2030 to around 945 TWh, with AI as the most important driver of that increase. That matters because it reframes AI from a pure software expansion into a capital-intensive infrastructure cycle.

That shift changes how the theme should be analyzed. The core question is no longer simply who builds the best model. It is increasingly who can secure, finance, and deploy the physical capacity required to train and serve those models at scale. Microsoft’s capex reached $37.5 billion in its latest reported quarter. Alphabet guided to $175 billion to $185 billion of capex for 2026. Meta guided to $115 billion to $135 billion. Those are not normal software numbers. They are the kind of spending plans you associate with industrial platforms building scarce capacity ahead of demand.

That is the real backdrop for the AI boom now. Demand is moving like software. Supply is arriving like infrastructure.

The real friction is not demand. It is delivery.

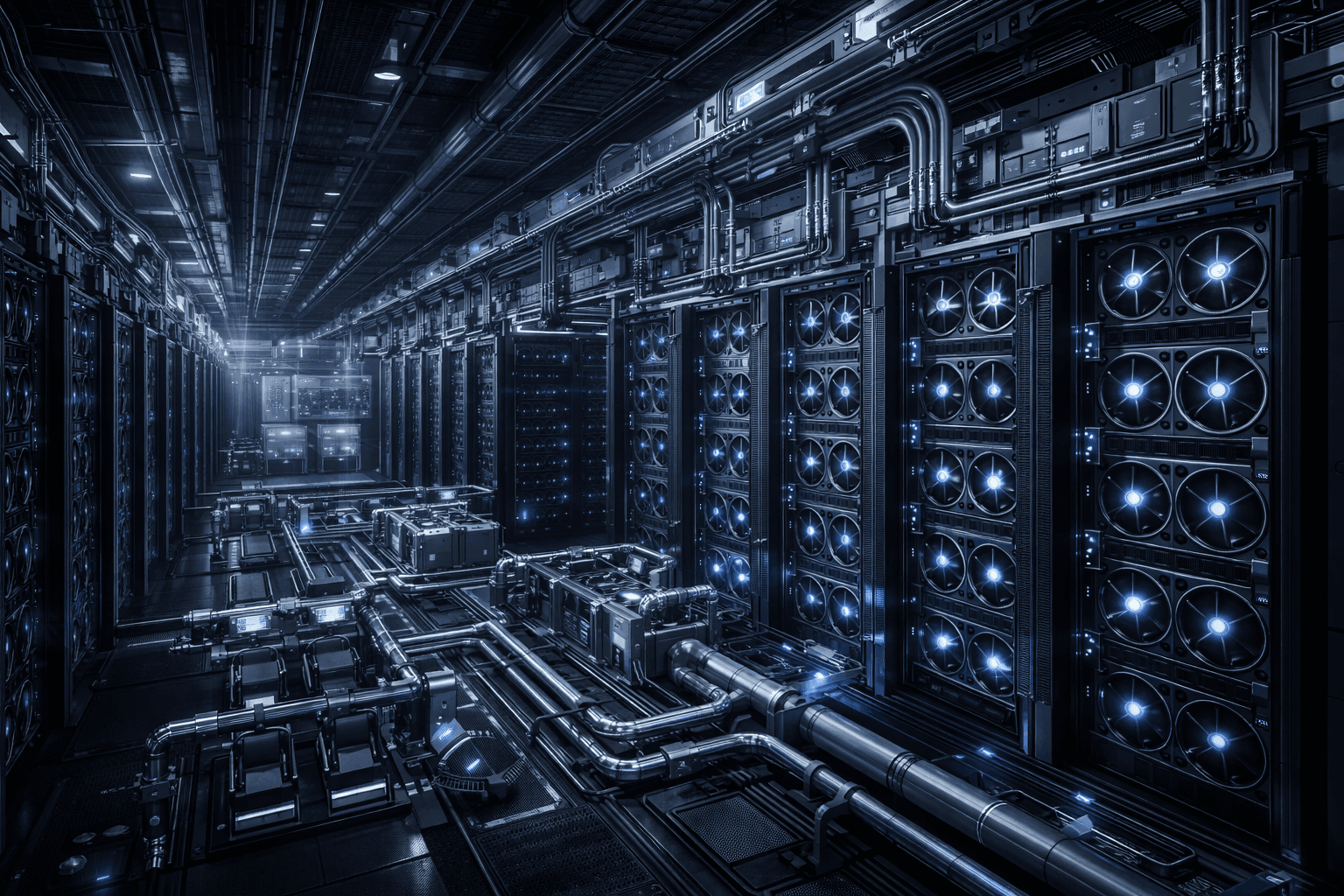

Training frontier models and serving them in production is no longer just a matter of writing better code. It requires dense clusters of accelerators, high-bandwidth networking, advanced packaging, specialized cooling, large power interconnects, and access to grid capacity that often takes years rather than quarters to secure. A modern AI data center is less like a traditional enterprise IT facility and more like a tightly coordinated industrial system.

That distinction matters because software markets usually scale with very little physical friction. Infrastructure markets do not. They face sequencing constraints. You need the chip supply, then the packaging capacity, then the servers, then the rack power, then the cooling system, then the substation, then the transmission access, then the energy contract. A delay in any one layer can hold back the monetization of all the others.

This is why the AI investment cycle now feels both explosive and constrained at the same time. On the demand side, everything is accelerating. On the supply side, everything has lead times.

And infrastructure lead times are rarely forgiving.
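The sequencing constraint described above can be sketched as a small gating calculation. This is a minimal illustration, not a forecast: every lead time below is a hypothetical placeholder, the `Layer` class and both function names are invented for this example, and the model assumes the layers are procured in parallel so that capacity goes live only when the slowest one is ready.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str
    lead_time_months: int  # hypothetical value, for illustration only


# The layers come from the text; the lead times are made-up placeholders.
LAYERS = [
    Layer("chip supply", 9),
    Layer("packaging capacity", 6),
    Layer("servers", 4),
    Layer("rack power", 6),
    Layer("cooling system", 8),
    Layer("substation", 24),
    Layer("transmission access", 36),
    Layer("energy contract", 12),
]


def _ready_month(layer, delays):
    """Month at which one layer is ready, including any extra delay."""
    return layer.lead_time_months + delays.get(layer.name, 0)


def months_until_online(layers, delays=None):
    """Capacity is usable only once every layer is ready (parallel assumption)."""
    delays = delays or {}
    return max(_ready_month(layer, delays) for layer in layers)


def gating_layer(layers, delays=None):
    """Name of the layer that currently sets the go-live date."""
    delays = delays or {}
    return max(layers, key=lambda layer: _ready_month(layer, delays)).name


if __name__ == "__main__":
    print(months_until_online(LAYERS), gating_layer(LAYERS))
    slipped = {"substation": 18}  # hypothetical 18-month slip
    print(months_until_online(LAYERS, slipped), gating_layer(LAYERS, slipped))
```

Under these placeholder numbers, a slip in a fast layer such as servers changes nothing, while a slip in the substation moves the go-live date for the whole site. That is the sense in which one delayed layer holds back the monetization of all the others.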

What recent developments changed

Recent months have not broken the AI thesis. They have clarified it.

The biggest technology platforms are not behaving as if AI is a short product cycle. They are behaving as if it is a long-duration capacity race. Alphabet’s 2026 capex outlook of $175 billion to $185 billion, Meta’s $115 billion to $135 billion plan, and Microsoft’s latest quarter with $37.5 billion of capex all point in the same direction: the strategic priority is no longer just AI capability, but AI capacity.

The energy side tells the same story. Large technology companies are increasingly trying to lock in long-duration power availability rather than treat electricity as a commodity input. That is an important change in posture. It suggests that power is no longer being managed as a background operating cost. It is being treated as a strategic asset that can determine how quickly new compute can come online. Meta’s latest results explicitly tied higher infrastructure investment to its AI roadmap, and the broader market narrative has shifted toward whether these companies can convert this spending into durable returns.

What really matters here is not that capex is high. Technology companies have always spent aggressively when a platform transition was underway. What matters is that this particular transition is colliding with the physical economy: electricity systems, industrial equipment, supply chains, permitting, and construction timelines.

That is why the AI race now looks less like a typical software land grab and more like a competition to control scarce industrial inputs.

The 10 U.S.-listed stocks most exposed to this theme

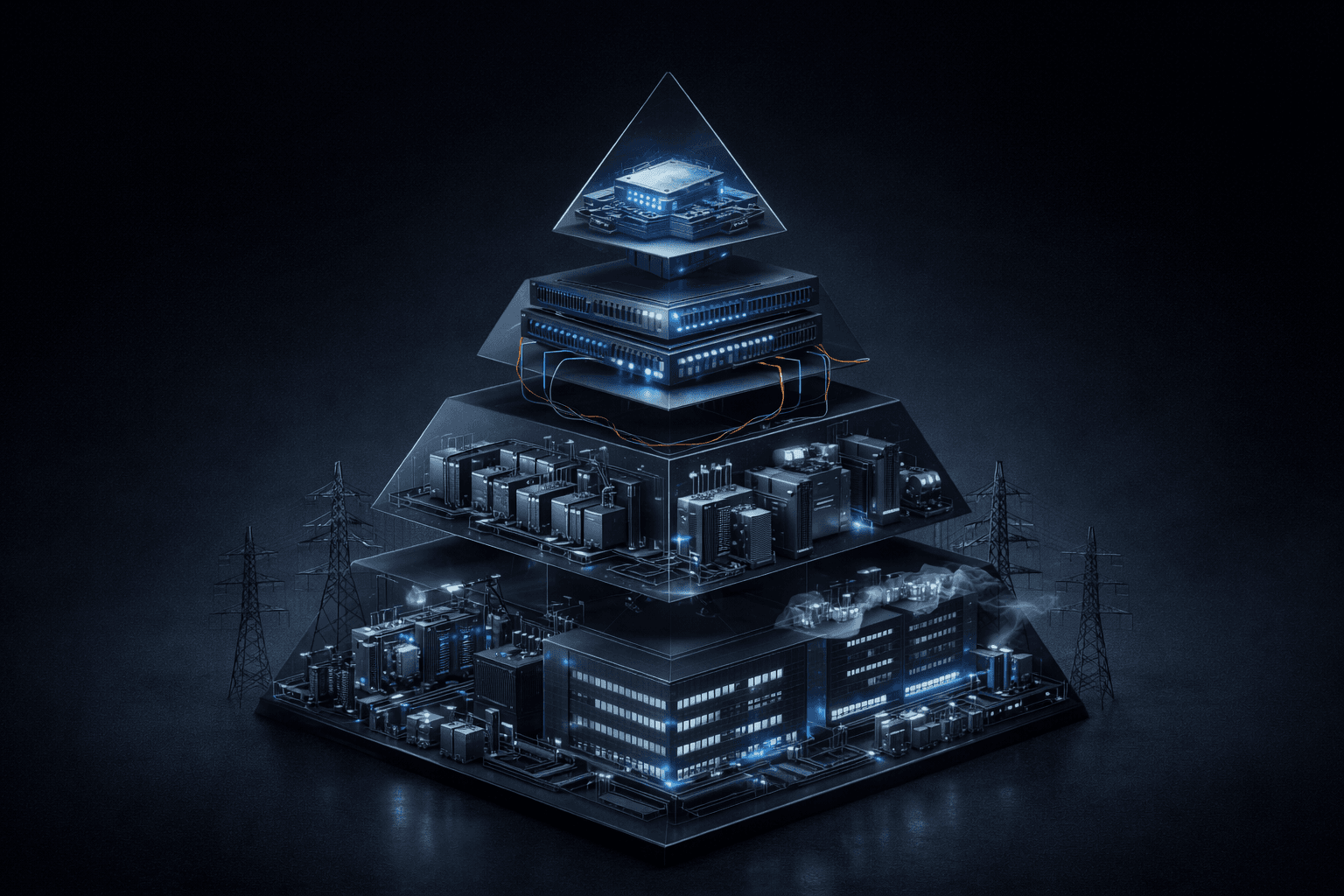

The cleanest way to think about AI infrastructure in public markets is not as a single basket, but as a stack of distinct economic layers. Some companies sit at the heart of compute. Some enable the data center to function. Some provide the power equipment around the facility. Some are exposed to the electricity system itself.

The opportunity is real across the stack. But the return profile is not the same across the stack.

1) NVIDIA (NVDA): the economic center of the compute bottleneck

NVIDIA remains the clearest direct beneficiary of AI infrastructure spending because it still sits at the center of the accelerator layer. That is the most visible bottleneck and, for now, the most valuable one. The company is not just selling chips. It is selling access to scarce compute in the form factor the market most wants.

But the key tension is that NVIDIA’s upside is now constrained less by end demand than by how quickly the broader ecosystem can scale around it. Supply chain readiness, advanced packaging, networking, and customer power availability all matter. This is why NVIDIA can remain one of the best businesses in the stack while still facing a more complex scaling path than the market narrative sometimes implies.

The business can scale enormously. The important nuance is that it may not scale smoothly.

2) AMD (AMD): the strategic value of being the alternative

AMD is often reduced to a simple “second source” story. That is too shallow. In a market where the largest customers increasingly want supply diversification, a credible second platform matters more than it would in a normal semiconductor cycle.

That creates room for AMD even if it does not displace the incumbent at the top of the stack. Its relevance comes not only from product competitiveness, but from buyer behavior. As hyperscalers and enterprise customers try to reduce dependence on one dominant supplier, AMD becomes strategically more important than its current share alone might suggest.

The upside here is less about immediate platform dominance and more about whether the company can translate strategic relevance into sustained adoption.

3) Broadcom (AVGO): the hidden leverage in networking and custom silicon

Broadcom is one of the companies that matters more to the AI buildout than its headline narrative suggests. Large AI clusters are not just compute problems. They are interconnect problems, switching problems, and increasingly custom silicon problems.

That is where Broadcom becomes important. Its exposure is not simply to AI enthusiasm, but to the architecture of scaled AI systems. As clusters grow denser and hyperscalers look for more tailored designs, the company sits in a layer of the stack that is technically indispensable and often underappreciated.

This is the kind of business that does not always dominate the public conversation, but it can still capture a large share of the economics.

4) Arista Networks (ANET): the network matters more than most investors think

AI data centers are forcing investors to think differently about networking. In a conventional enterprise IT setup, networking is essential but often not the primary value driver. In a large-scale AI cluster, network architecture can become part of the bottleneck itself.

That is where Arista stands out. If the next wave of data centers is denser, more latency-sensitive, and more dependent on high-throughput east-west traffic, then network design stops being a secondary consideration. It becomes part of the system’s overall compute efficiency.

The market often talks about GPUs as if they are the whole story. They are not. A weak network can turn expensive compute into underutilized compute. That is why Arista belongs in this discussion.

5) Vertiv (VRT): one of the clearest picks-and-shovels winners

Vertiv sits in one of the most attractive parts of the stack because it benefits directly from AI data-center intensity without needing to predict which model or application wins. Its business is tied to power management, thermal systems, and the physical operability of dense compute environments.

Its recent numbers are a good reminder of how quickly this demand is showing up in the real economy. Vertiv reported roughly 252% organic order growth in the fourth quarter, a striking figure that signals how forcefully AI-driven infrastructure demand is moving into the power-and-cooling layer.

This is the kind of company that can keep compounding through an AI infrastructure cycle even if sentiment around the model layer becomes more volatile. It sells necessity, not narrative.

6) Eaton (ETN): electrification is becoming a larger pool of value

Eaton is a good example of how AI infrastructure broadens the investable universe beyond obvious technology names. As rack density rises and facilities become more power-intensive, the electrical architecture around the data center becomes a bigger source of value.

The company’s latest results showed strong growth in its Electrical Americas segment, with increasing data-center exposure helping support that strength. That matters because it suggests AI is not only driving demand for semiconductors, but for the industrial systems that make those semiconductors usable in production environments.

This is where the theme becomes more interesting. Eaton is not an AI company in the conventional sense. But it is exposed to one of the most durable consequences of the AI buildout: rising electrification intensity.

7) Quanta Services (PWR): the grid-side beneficiary

Quanta is a reminder that the AI boom ultimately has to interface with the power grid. New data centers require transmission, distribution, interconnection work, and site-level electrical infrastructure. That makes the grid-facing contractors and engineering players more relevant than many investors initially assumed.

This is a different kind of exposure. It is not high-conviction software monetization. It is physical deployment work. But if the AI buildout remains multi-year, that can be a very attractive place to sit. The companies enabling new capacity to connect to the grid may end up with more durable demand than some of the higher-beta names above them in the stack.

That is why Quanta belongs on the list.

8) GE Vernova (GEV): the megawatt side of the AI story

The market has spent a lot of time obsessing over compute scarcity. It is now spending more time on power scarcity. GE Vernova matters because new data-center demand increasingly raises the question of how incremental megawatts are actually supplied.

In the medium term, gas generation remains one of the most practical ways to bring dispatchable power online at scale. That makes companies with exposure to the generation buildout more important to the AI theme than the market once assumed.

The key point is not that GE Vernova is suddenly a technology company. It is that the AI cycle is now large enough to pull generation equipment providers into the same investment conversation.

9) Constellation Energy (CEG): electricity itself is becoming strategic

There is a meaningful difference between talking about energy as a macro input and talking about it as a strategic bottleneck. AI is pushing the conversation toward the latter.

Constellation stands out because reliable, large-scale power supply is becoming more valuable in a world where data-center demand is ramping quickly. If power availability becomes a gating factor for compute deployment, then generation assets with the right reliability profile become more strategically important.

This is one of the clearest examples of the AI theme migrating into sectors that many investors would not have historically treated as part of the same trade.

10) Digital Realty (DLR): physical data-center capacity is its own scarce asset

The last layer investors should not ignore is the facility itself. AI demand is not only a demand shock for chips and power. It is also a demand shock for appropriately located, power-accessible, highly connected data-center real estate.

That makes Digital Realty relevant. Not every data-center operator will benefit equally, and not every facility is suited for high-density AI workloads. But the broad point remains: if AI drives a sustained expansion in physical capacity needs, then data-center real estate becomes more strategic than it looked in the pre-AI period.

This layer may not deliver the same headline growth as accelerators, but it can still capture a meaningful share of the infrastructure economics.

How to think about the stack

One mistake investors make is treating all AI infrastructure exposure as interchangeable. It is not.

NVIDIA, AMD, Broadcom, and Arista are closer to the compute and networking core. That usually means faster growth, higher expectations, and more valuation sensitivity. Vertiv and Eaton sit in the operability layer. They sell the systems that make dense compute environments function in the real world. Quanta, GE Vernova, and Constellation are tied to the power system behind the data center. Digital Realty gives exposure to the physical footprint that hosts it all.

These are not small differences. They define where the economic rent sits, how long demand visibility may last, and what kind of disappointment the market is willing to tolerate.

The higher you go in the stack, the greater the upside can be. But the higher you go, the more perfection tends to be priced in.

The lower you go in the stack, the less glamorous the story often looks. But the demand can be more durable precisely because it is tied to physical necessity rather than application buzz.
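One way to keep the layers distinct in practice is to write the grouping down as a lookup table. The sketch below uses only the tickers and groupings named in this piece; the layer labels, the `STACK` name, and both helper functions are illustrative choices, not an established taxonomy.

```python
from collections import Counter

# Layer grouping as described in the text; labels are illustrative.
STACK = {
    "compute and networking core": ["NVDA", "AMD", "AVGO", "ANET"],
    "operability (power and cooling)": ["VRT", "ETN"],
    "power system": ["PWR", "GEV", "CEG"],
    "physical footprint": ["DLR"],
}


def layer_of(ticker):
    """Return the stack layer for a ticker, or None if it is not on the list."""
    for layer, tickers in STACK.items():
        if ticker.upper() in tickers:
            return layer
    return None


def layer_counts(holdings):
    """Count how many holdings fall in each layer (unlisted tickers are skipped)."""
    layers = (layer_of(ticker) for ticker in holdings)
    return dict(Counter(layer for layer in layers if layer is not None))


if __name__ == "__main__":
    print(layer_counts(["NVDA", "AMD", "AVGO", "VRT"]))
```

A basket that looks diversified by ticker count can still sit almost entirely in one layer, which is the concentration the framework above is meant to expose.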

Market Reaction

The market has become more selective in how it rewards AI exposure. Massive capex plans are no longer treated as automatically bullish simply because they are tied to AI. Investors are increasingly distinguishing between companies that are spending heavily and companies that can plausibly earn high returns on that spending. Amazon’s latest results and 2026 capex outlook reinforced that point: strong demand is not enough on its own if the market is uncertain about the path from infrastructure investment to incremental economics.

This suggests the market is moving from first-order AI enthusiasm to second-order scrutiny. The question is no longer whether AI demand is real. The question is where the returns will concentrate, and how much capital intensity each business can absorb before valuation discipline reasserts itself.

That is a healthier phase of the cycle, even if it is a less comfortable one.

Macro overlay

Macro matters here, but not in the generic sense.

This is not mainly a rates story, although rates do matter because these are long-duration, capital-heavy investments. The more important macro linkage is industrial capacity: power generation, transmission, utility planning, construction timelines, and equipment availability. In other words, the AI cycle is now partially constrained by the same kinds of bottlenecks that shape large industrial expansions.

That is why the theme increasingly crosses sector boundaries. You cannot understand AI infrastructure by looking only at software demand, semiconductor roadmaps, or cloud growth. You also have to look at electricity systems and capital goods.

When a technology cycle starts pulling the power sector into its orbit, you are no longer dealing with a normal software adoption curve.

Positioning

At this point in the cycle, the most rational framing is probably: high-quality opportunity, but increasingly differentiated risk-reward.

The core compute names still deserve premium attention because they sit closest to the most valuable bottlenecks. But that also means expectations are elevated and execution has less room for error. The hyperscaler layer offers enormous economic potential, though the debate there is shifting toward return on invested capital rather than pure growth. The infrastructure-adjacent names in power, cooling, electrical equipment, and grid buildout may offer a different kind of asymmetry: less narrative glamour, but potentially longer demand duration and lower dependence on which model wins.

That does not make one layer universally better than another. It means investors should stop thinking about “AI infrastructure” as one trade.

It is a stack. And each layer has its own valuation logic, timing risk, and durability profile.

Final synthesis

What did recent developments actually change?

They did not materially change the core thesis. They reinforced it.

The last few months made one thing harder to ignore: AI may present itself as a software revolution, but underneath it is becoming one of the largest physical infrastructure buildouts in modern technology. The capex plans, the power procurement behavior, and the growing attention to electrical and cooling constraints all point the same way. This is no longer just about better models. It is about who can secure the scarce industrial inputs required to run those models at scale.

That distinction matters for investors because infrastructure cycles behave differently from software cycles. They start slower, require more capital, and usually last longer. They also tend to redistribute value across a wider set of companies than the market initially expects.

So the hidden bottleneck of the AI boom is not hidden anymore. The market can see it now.

The real question is where the bottleneck is most persistent, who owns it, and which part of the stack is still underappreciated relative to the duration of the buildout. That is where the asymmetry increasingly lives.
